The unexpected complexity of data connectors

A connector is a program that fetches data from an external system to bring it into your system. How hard can it be to make one? By that definition, the following command line is already a connector:

It uses the GitHub API to retrieve the list of Sinequa’s public repositories and stores that information in a JSON file. Under certain conditions, it would be perfectly fine to use this simple command line in a project: It is simple, reliable, and easy to understand, modify, and maintain.

However, in general, this “connector” lacks many capabilities. These requirements are easy to overlook at the start of a project, but they can be critical.

Let’s take a look at these missing capabilities:

Configuration Management

In the example above, we fetch Sinequa’s list of repositories and store the response as-is. But say we want to fetch any user’s list of repositories and perhaps store only a specific subset of the response. You can address these needs by adding parameters to your connector and letting users set them before each run. But this entails other problems:

- The number of parameters can proliferate. Just look at the length of the curl manual (curl is the program from our command line above). The more parameters there are, the more overwhelming the connector becomes, both for the user configuring it and for a newcomer trying to understand what the previous user did. Complexity does not scale well: A connector with ten parameters might be perfect initially but become incomprehensible with a hundred.

- Users generally do not want to edit command-line arguments or raw files. They prefer a friendly User Interface with a clean list of parameters, tooltips, autocompletion, and form validation. So, you will need to build a system to store these parameters and an app to modify them. You might also want to store the history of these parameters in case someone changes something and breaks the connector.
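As an illustration, here is a minimal Python sketch of the two needs above: validating parameters before a run, as a form would, and keeping a history of saved configurations so that a breaking change can be rolled back. The connector, its parameters (`user`, `fields`, `max_pages`), and the `ConfigStore` class are hypothetical; a real system would persist the history in a database rather than in memory.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class RepoConnectorConfig:
    """Parameters of a hypothetical "list a user's repositories" connector."""
    user: str = "sinequa"
    fields: list[str] = field(default_factory=lambda: ["name", "html_url"])
    max_pages: int = 10

    def validate(self) -> list[str]:
        """Return readable error messages, as a configuration form would show them."""
        errors = []
        if not self.user.strip():
            errors.append("user: must not be empty")
        if not self.fields:
            errors.append("fields: select at least one field")
        if not 1 <= self.max_pages <= 100:
            errors.append("max_pages: must be between 1 and 100")
        return errors


class ConfigStore:
    """Keeps every saved version so that a breaking change can be rolled back."""

    def __init__(self, initial: RepoConnectorConfig) -> None:
        self._history = [copy.deepcopy(initial)]

    @property
    def current(self) -> RepoConnectorConfig:
        # Hand out a copy so callers cannot silently edit the stored history.
        return copy.deepcopy(self._history[-1])

    def save(self, config: RepoConnectorConfig) -> None:
        # Invalid configurations are rejected before they can break a run.
        errors = config.validate()
        if errors:
            raise ValueError("; ".join(errors))
        self._history.append(copy.deepcopy(config))

    def rollback(self) -> RepoConnectorConfig:
        # Never drop the initial version.
        if len(self._history) > 1:
            self._history.pop()
        return self.current
```

For example, saving `RepoConnectorConfig(user="", max_pages=500)` raises a `ValueError` that lists both problems, and the previously saved version stays current.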

Connector Integration

Beyond configuration management, connectors are generally part of a larger, more complex system. A connector may be launched by one process while its output is consumed by another, and other processes may schedule and monitor these executions or collect logs to enable troubleshooting. The connector configuration might also be coupled to the larger system (for example, which database the connector should feed).

They are also generally not alone: If you need to connect to multiple external systems, your connectors will probably share certain functionality and parameters (e.g., “Ignore these file extensions” or “Use that SSO system to authenticate”). This means that some parts will be common to all connectors, while others will be specific to each one.
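One common way to organize this split, sketched here in Python under the assumption of a simple class hierarchy, is a base class that owns the shared parameters and behavior (here, an “ignore these file extensions” filter), while each subclass implements only the source-specific listing. `CommonSettings`, `Connector`, and `FileShareConnector` are hypothetical names.

```python
from abc import ABC, abstractmethod
from collections.abc import Iterator
from dataclasses import dataclass


@dataclass
class CommonSettings:
    """Parameters shared by every connector (hypothetical example)."""
    ignored_extensions: frozenset[str] = frozenset({".tmp", ".log"})


class Connector(ABC):
    """Shared part: behavior every connector gets without reimplementing it."""

    def __init__(self, settings: CommonSettings) -> None:
        self.settings = settings

    @abstractmethod
    def list_items(self) -> Iterator[dict]:
        """Specific part: yield raw items, each with at least a 'path' key."""

    def run(self) -> list[dict]:
        kept = []
        for item in self.list_items():
            # Common filtering, applied identically to every source.
            if item["path"].lower().endswith(tuple(self.settings.ignored_extensions)):
                continue
            kept.append(item)
        return kept


class FileShareConnector(Connector):
    """Hypothetical source-specific connector; a real one would walk a file share."""

    def __init__(self, settings: CommonSettings, paths: list[str]) -> None:
        super().__init__(settings)
        self.paths = paths

    def list_items(self) -> Iterator[dict]:
        for path in self.paths:
            yield {"path": path}
```

A new source then only needs its own `list_items()`; changing the shared filter changes it for every connector at once, which is both the benefit and the risk of common parts.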

In other words, connectors do not exist in a vacuum: A standalone prototype may work fine on its own, but it will need to follow several conventions and protocols to be integrated into the larger system.

As a consequence, connectors are not a commodity. It is generally not possible to take a connector from GitHub and use it as-is in your project. It is also challenging to subcontract the development of connectors to independent developers who, however skilled, might not know the larger context well and could produce inconsistent software.

Connector Customization

Suppose our one-line connector is now hidden behind a friendly user interface that lets users control everything it does. Configuration still has its limits: Even with hundreds of parameters, unforeseen needs always arise at some point in the project.

The solution to this problem is to expose some “hooks” at different stages of the connector execution (for example, initialization and data post-processing), letting users customize the connector’s behavior with some ad hoc code or commands.

For example, external systems generally require some authentication. You may need to write code that authenticates with a Single-Sign-On system and obtains a token. This code could be “plugged into” the connector’s initialization so that the token is passed with each request the connector makes.
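A minimal Python sketch of such hooks, assuming two hypothetical stages (`on_init` and `on_post_process`) and a placeholder `get_sso_token()` function standing in for the real Single-Sign-On call: the initialization hook adds the token to the headers used by every request, and a post-processing hook cleans up each record. The `fetch()` method is a stand-in for real HTTP requests.

```python
from typing import Callable

InitHook = Callable[[dict], None]   # receives the shared run context
PostHook = Callable[[dict], dict]   # receives one record, returns it (possibly modified)


class HookableConnector:
    """Connector skeleton exposing two hypothetical hook points."""

    def __init__(self) -> None:
        self.context: dict = {"headers": {}}
        self._init_hooks: list[InitHook] = []
        self._post_hooks: list[PostHook] = []

    def on_init(self, hook: InitHook) -> None:
        self._init_hooks.append(hook)

    def on_post_process(self, hook: PostHook) -> None:
        self._post_hooks.append(hook)

    def fetch(self) -> list[dict]:
        # Stand-in for the real requests: it records the headers it would send.
        return [{"id": 1, "title": "  Quarterly report ",
                 "sent_headers": dict(self.context["headers"])}]

    def run(self) -> list[dict]:
        for hook in self._init_hooks:
            hook(self.context)
        records = self.fetch()
        for hook in self._post_hooks:
            records = [hook(record) for record in records]
        return records


# --- User customization (hypothetical) ---
def get_sso_token() -> str:
    # A real hook would call the organization's SSO endpoint here.
    return "example-token"


def add_sso_token(context: dict) -> None:
    context["headers"]["Authorization"] = f"Bearer {get_sso_token()}"


def clean_title(record: dict) -> dict:
    return {**record, "title": record["title"].strip()}


connector = HookableConnector()
connector.on_init(add_sso_token)
connector.on_post_process(clean_title)
results = connector.run()
```

The connector never needs to know how the token is obtained. Note, though, that the signatures of `on_init` and `on_post_process` become a contract the moment users write hooks against them.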

Hooks can be very powerful, but exposing a set of APIs to users has some consequences you must be aware of:

- APIs can be used maliciously. Users or third parties can now access potentially sensitive data programmatically. This may be acceptable, as long as you are aware of it, and the necessary security measures are put in place.

- APIs can be misused unintentionally, and now you will need to argue with users to figure out if a bug comes from your connector or their customization.

- Once an API exists (and is used), you cannot just break it. In fact, you will forever live with the anxiety of breaking someone’s customization whenever you touch the code of your connector.

Automation & Performance

In general, end users prefer to see the most recent data in their system. This means you will want to run your connector regularly, or even as frequently as possible. But this raises several interesting questions:

- If your connector runs often, it will process almost the same data every time. This is probably very inefficient. So, is there a way to know whether you have already processed the data and can safely ignore it?

- How can you determine that data has been modified? Can you trust a “modified date” metadata, or do you have to compare the content piece by piece?

- If some data is “missing” from the data source, should you remove it from your system as well? Or could it be a bug in the external system?

- If users have somehow modified the data in your system, what should you do when the source data is also updated? It is probably not OK to simply overwrite their changes.

- If your connector keeps bringing in new data, is there a risk it could clog up your system? If the processes that consume the data are not fast enough, can the connector wait for them?

- Conversely, your connector may make too many requests to the external data source. Can you slow it down to avoid effectively DDoSing that external system?

- On the other hand, if your connector is slower than the other parts and is effectively the system’s bottleneck, can you make it more efficient? Can you make the connector multi-threaded or even distributed over multiple servers? Note that modern cloud infrastructures like Azure allow you to create “elastic” systems that automatically scale the amount of hardware with the system load.

- What should you do about errors? Connectors run into many different types of errors: Some can be safely ignored, some mean that the original source has a problem, and some should stop the connector immediately. But then, can you restart your connector from the point where it failed?
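The questions about already-processed, modified, and missing data can be sketched in Python with content fingerprints. This sketch assumes the connector can list every record with a stable ID, and it compares a SHA-256 hash of each record’s content with the hash saved on the previous run instead of trusting a “modified date”. It also refuses to treat a mass disappearance as a deletion, on the assumption that it more likely signals a problem in the external system; the 10% threshold is arbitrary.

```python
import hashlib
import json
from dataclasses import dataclass, field


def fingerprint(record: dict) -> str:
    """Hash of the record's content; sort_keys makes it independent of key order."""
    payload = json.dumps(record, sort_keys=True, ensure_ascii=False).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class SyncPlan:
    added: list[str] = field(default_factory=list)
    modified: list[str] = field(default_factory=list)
    unchanged: list[str] = field(default_factory=list)
    missing: list[str] = field(default_factory=list)


def plan_sync(previous: dict[str, str], current: dict[str, dict]) -> SyncPlan:
    """Compare this run's records with the fingerprints saved by the previous run.

    previous: record id -> fingerprint from the last successful run
    current:  record id -> record content fetched now
    """
    plan = SyncPlan()
    for rec_id, record in current.items():
        old = previous.get(rec_id)
        if old is None:
            plan.added.append(rec_id)
        elif old != fingerprint(record):
            plan.modified.append(rec_id)
        else:
            plan.unchanged.append(rec_id)  # safe to skip downstream processing
    # Seen last time but absent now: only candidates for deletion.
    plan.missing = sorted(set(previous) - set(current))
    return plan


def deletions_look_safe(plan: SyncPlan, previous_count: int, max_ratio: float = 0.1) -> bool:
    """Refuse mass deletions: if many records vanish at once, suspect the source."""
    if previous_count == 0:
        return True
    return len(plan.missing) / previous_count <= max_ratio
```

Hashing still requires fetching every record; a trustworthy modified date, when the source offers one, avoids that cost.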
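The questions about throttling, errors, and restarting can also be sketched in Python. This hypothetical `fetch_pages` generator waits between requests through a minimal `RateLimiter`, retries only errors marked as transient (with exponential backoff), lets any other exception stop the run, and records the next page in a `checkpoint` dictionary so that a new run can resume where the previous one stopped. `fetch_page`, `TransientError`, and the page-based pagination are assumptions.

```python
import time
from collections.abc import Callable, Iterator
from typing import Optional


class TransientError(Exception):
    """Raised by fetch_page for errors worth retrying (e.g. a timeout)."""


class RateLimiter:
    """Enforces a minimum delay between two consecutive requests."""

    def __init__(self, min_interval: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.min_interval = min_interval
        self.clock = clock
        self.sleep = sleep
        self._last: Optional[float] = None

    def wait(self) -> None:
        now = self.clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self.sleep(remaining)
                now = self.clock()
        self._last = now


def fetch_pages(fetch_page: Callable[[int], list],
                checkpoint: dict,
                limiter: RateLimiter,
                max_retries: int = 3,
                backoff: float = 1.0,
                sleep: Callable[[float], None] = time.sleep) -> Iterator[list]:
    """Yield pages of items, resuming from checkpoint["next_page"] if present."""
    page = checkpoint.get("next_page", 1)
    while True:
        for attempt in range(max_retries + 1):
            limiter.wait()
            try:
                items = fetch_page(page)
                break
            except TransientError:
                if attempt == max_retries:
                    raise  # give up: the error no longer looks transient
                sleep(backoff * 2 ** attempt)  # exponential backoff: 1 s, 2 s, 4 s...
        # Any other exception propagates immediately and stops the run.
        if not items:
            return  # an empty page means there is nothing left to fetch
        yield items
        # Only advance once the caller has finished with this page.
        checkpoint["next_page"] = page + 1
        page += 1
```

Because the checkpoint advances only after the caller has consumed a page, a crash can cause a page to be processed twice but never skipped, so downstream processing should tolerate duplicates.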

Security

Very often, connectors will retrieve sensitive data, protected by strict access rights.

The first consequence is that your connector will need privileged access to the original data source. For example, a “service account” will be created with special rights allowing the connector to see everything.

This is generally not a problem at the prototyping stage because you can test your connector with fake or non-sensitive data. But at the production stage, the people in charge of the original data source will need to entrust you, your connector, and the larger system around it with what is essentially a “key to their house”. Your connector had better be well-secured and not break anything in the source system, or else its owners will never let you in again!

A second consequence is that the security of the original system should be “propagated” into your system. Therefore, your connector should retrieve data and the security associated with that data, and your system should enforce that security when users try to access it. This is challenging when you have multiple connectors and data sources, because they may be using different types of user identities and various types of security models. Reconciling these differences can be difficult technically, especially when considering that the list of users and their access rights are constantly evolving within a large organization.
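A minimal Python sketch of security propagation, assuming each document is stored with the principals allowed by its source (copied at indexing time) and that a hypothetical `IDENTITY_MAP` reconciles each source’s identities with one canonical identity per user or group. Principals that cannot be mapped never grant access, so the check fails closed. The source names and identities are made up for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical reconciliation table: (source, principal as the source names it)
# -> canonical identity used by our system. A real one would be refreshed
# regularly from each source's directory, since users and groups keep changing.
IDENTITY_MAP: dict[tuple[str, str], str] = {
    ("fileshare", "CORP\\alice"): "alice@example.com",
    ("fileshare", "CORP\\Finance"): "group:finance",
    ("github", "alice-gh"): "alice@example.com",
}


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str
    allowed: frozenset[str]  # principals copied from the source's access rights


def canonical(source: str, principal: str) -> Optional[str]:
    return IDENTITY_MAP.get((source, principal))


def can_read(doc: Document, user: str, groups: frozenset[str]) -> bool:
    """True if one of the document's principals maps to the user or one of their groups."""
    mine = {user} | set(groups)
    # Unmapped principals yield None, which never matches: the check fails closed.
    return any(canonical(doc.source, p) in mine for p in doc.allowed)


def visible_documents(docs: list[Document], user: str, groups: frozenset[str]) -> list[str]:
    return [d.doc_id for d in docs if can_read(d, user, groups)]
```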

The Long Game

A connector may not be the most sophisticated part of your system, but it should be one of the most robust and reliable. The difficulty is that even if the connector itself is reliable, the external system might not be. What if the service account is revoked? What if a software update breaks an API you rely on? What if the authentication system changes the protocol you were using?

In software development, automated testing detects problems before the software goes into production. But with connectors, you cannot just test your software. You also have to test the software you are connected to.
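One way to test the software you are connected to is a contract check, run on a schedule against the live system rather than only before a release. This Python sketch assumes the connector depends on four fields of each item in a JSON array; the field list is hypothetical (it mirrors fields found in GitHub repository objects) and would come from your own connector’s needs. `check_live` uses only the standard library.

```python
import json
import urllib.request

# Fields our hypothetical connector relies on, with their expected JSON types.
REQUIRED_FIELDS: dict[str, type] = {
    "id": int,
    "name": str,
    "html_url": str,
    "updated_at": str,
}


def contract_violations(payload: object) -> list[str]:
    """Describe every way the payload differs from what the connector expects."""
    if not isinstance(payload, list):
        return [f"expected a JSON array, got {type(payload).__name__}"]
    problems = []
    for i, item in enumerate(payload):
        if not isinstance(item, dict):
            problems.append(f"item {i}: expected an object")
            continue
        for name, expected in REQUIRED_FIELDS.items():
            if name not in item:
                problems.append(f"item {i}: missing '{name}'")
            elif not isinstance(item[name], expected):
                actual = type(item[name]).__name__
                problems.append(f"item {i}: '{name}' is {actual}, expected {expected.__name__}")
    return problems


def check_live(url: str) -> list[str]:
    """Call the real endpoint (e.g. from a scheduled job) and check the contract."""
    with urllib.request.urlopen(url, timeout=10) as response:
        payload = json.load(response)
    return contract_violations(payload)
```

An empty list means the external system still behaves as the connector expects; anything else is an early warning that an update on the other side needs attention.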

Whenever the target system ships a major software update, you will probably have to ship a major update as well. Effectively, the evolution of the software you are connected to dictates the amount of effort you must put into your own.

Conclusion

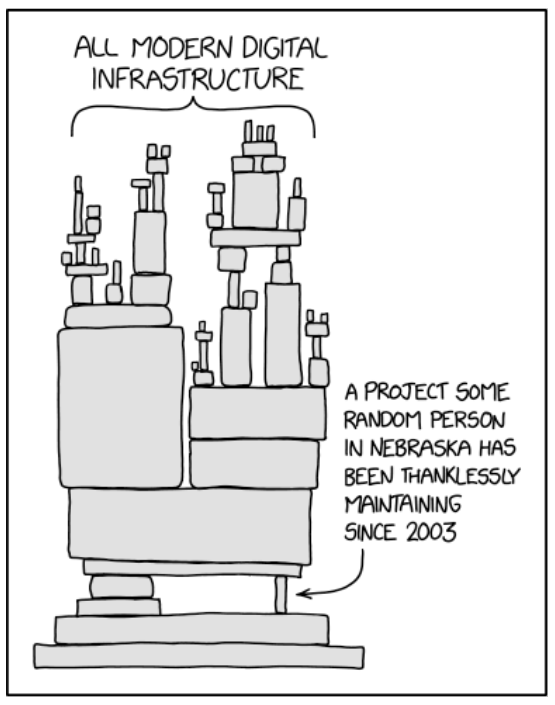

Taken individually, these capabilities are all manageable, and there exist open-source libraries and software vendors to help along the way. However, integrating them all into a consistent system and maintaining them for years is difficult. Software can easily be more complex than the sum of its parts, especially when some parts were not anticipated from the start.
